ETHICS AND REGULATIONS IN THE EMERGENCE OF PHYSICAL AI

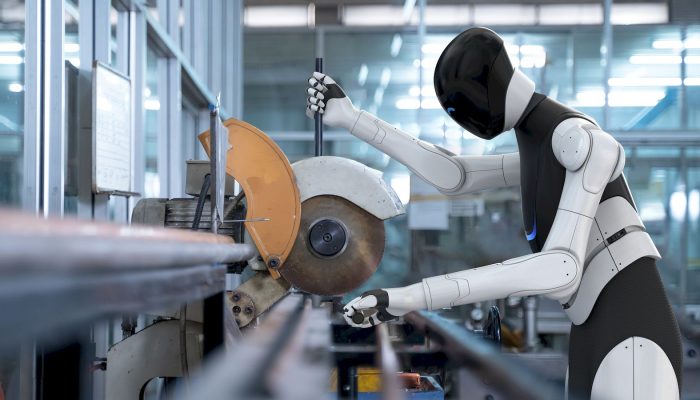

The rise of physical AI (robots and embodied systems that interact directly with the physical world) marks a move towards autonomous machines in human environments. As companies deploy advanced humanoids, ethical and regulatory frameworks will need to advance as well.

Core Ethical Challenges

Safety remains paramount. Incidents involving robots mishandling tools highlight the risk of physical harm. Bias in perception systems can perpetuate discrimination; facial recognition errors, for example, can adversely affect certain groups. Privacy breaches are likely when robots equipped with cameras and microphones enter homes and workplaces. The most complex question is that of moral status: should advanced humanoids capable of pain simulation or emotional display receive rights protections? And how can they be held accountable if they cause pain or damage?

Regulatory Landscape

Regulations vary across countries but are in almost all cases inadequate to deal with the consequences and speed of AI/AGI advancement. In some cases oversight is fragmented, as in the USA. As is often the case in technology, regulators and legislators arrive late to deal with effects they did not foresee. The EU’s AI Act (2024) requires high-risk embodied systems to undergo strict conformity assessments and mandates transparency and human oversight. China’s 2025 Robotics Ethics Guidelines emphasize state supervision and “socialist core values.” Overall, international coordination is still weak. The UN’s 2023 Ljubljana Process aims to harmonize standards but lacks enforcement power.

Looking Forward

As physical AI moves from warehouses to homes by 2030, policymakers face a narrow window to establish proportionate, technology-neutral regulations that protect human dignity without stifling innovation. Delayed action risks preventable harm; premature restrictions risk ceding strategic advantage. Balanced, evidence-based governance—neither laissez-faire nor prohibitive—offers the most defensible path forward.

By Dr. Keren Obara.

Marketing Associate.